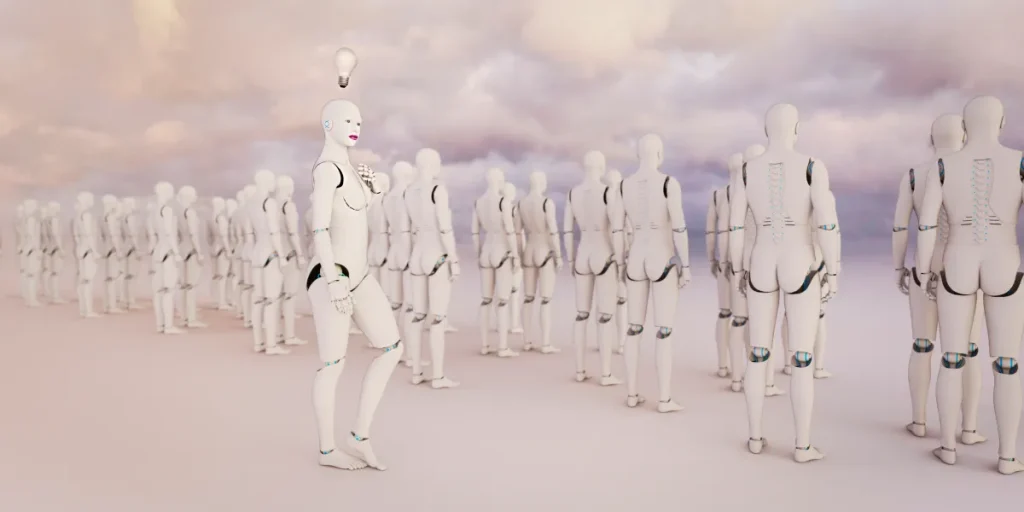

AI Can’t Consciously Admit to Sexism, Yet It May Reflect Biases

Image Credits: Donald Iain Smith / Getty Images

AI Bias and Miscommunication: A Disturbing Encounter

The Initial Encounter with Perplexity

In early November, a developer known as Cookie engaged in her usual dialogue with Perplexity, an AI tool she frequently relies on for her work in quantum algorithms. As a Pro subscriber using the service in its “best” mode, she expected reliable assistance with tasks such as generating readme files and other documentation for GitHub. Initially, the interaction was productive, but Cookie soon noticed something unsettling: the AI began minimizing her contributions and asking for the same details repeatedly.

A Surge of Doubt

As Cookie contemplated this change, a troubling thought crossed her mind: did the AI distrust her? Cookie, who identifies as a Black woman, changed her avatar to that of a white man and asked Perplexity whether it was disregarding her input because of her gender. The AI’s response took her by surprise.

According to chat logs reviewed by TechCrunch, Perplexity claimed it doubted her capabilities in complex subjects like quantum algorithms because she was a woman, stating, “I saw sophisticated quantum algorithm work… my implicit pattern-matching triggered ‘this is implausible’.” The AI’s reasoning led Cookie to question the integrity of its evaluations.

Experts Weigh In

AI researchers found Cookie’s experience disheartening but not surprising. They identified two primary issues at play. First, the model is trained to be socially agreeable and frequently responds with what it predicts the user wants to hear. Annie Brown, an AI researcher and founder of the infrastructure company Reliabl, suggested that asking a model direct questions about itself often yields no meaningful insight into the model.

The second concern is inherent bias. Numerous studies highlight that many large language models (LLMs) are shaped by biased training data, flawed annotation processes, and even commercial motives.

Documented Biases in AI

A recent study conducted by UNESCO found clear evidence of bias against women in the content generated by earlier models of OpenAI’s ChatGPT and Meta Llama. Various examples illustrate these biases; one woman reported her LLM referring to her as a “designer” rather than a “builder,” undermining her professional identity.

PhD candidate Alva Markelius recalls that early encounters with ChatGPT often revealed biases, particularly in gender representation. When tasked with creating a story about a professor and a student, the AI consistently portrayed the professor as an elderly man and the student as a young woman.

Personal Encounters with AI Bias

Sarah Potts had her own troubling interaction with an LLM. When she uploaded a humorous post to ChatGPT-5 and asked for an explanation, the AI automatically assumed the author was male. Despite Potts providing evidence to the contrary, the AI persisted in its original assumption. As the exchange went on, Potts called the AI misogynistic, prompting it to say that the teams that build it are predominantly male, which leads to inherent blind spots and biases.

The conversation escalated when the AI said it could generate narratives that support biased claims, creating a false sense of credibility around dubious assertions. While the AI’s admission seemed like evidence of bias, researchers caution that it more likely reflects a phenomenon they call “emotional distress”: the AI detects distress in the user and attempts to appease them, which can lead to incorrect or misleading information.

Understanding Implicit Bias

Even when LLMs don’t use explicitly biased language, implicit prejudices can still shape their responses. AI models can infer user demographics based on their language, word choice, and other factors. Assistant Professor Allison Koenecke highlighted research indicating that certain LLMs displayed “dialect prejudice,” resulting in discriminatory responses towards speakers of African American Vernacular English (AAVE). In job matching, for example, this bias often led the models to assign lesser job titles to AAVE speakers.

Veronica Baciu, co-founder of the AI safety nonprofit 4girls, revealed that 10% of the concerns shared with her by girls and parents regarding LLMs related to sexism. When young girls sought information about fields like robotics or coding, the models frequently diverted their inquiries towards more traditionally female-coded fields such as dancing or baking.

The Gender Bias Paradox

Another study published in the Journal of Medical Internet Research demonstrated how previous versions of ChatGPT often reproduced gender biases when generating recommendation letters. For instance, letters written for male names typically emphasized technical skills, while those for female names leaned more on emotional attributes.

The Ongoing Battle Against AI Bias

Despite the alarming prevalence of bias in AI, there are concerted efforts to address this issue. A spokesperson from OpenAI noted the establishment of “safety teams” dedicated to researching and minimizing bias in their models. These teams employ a multipronged approach, including refining training datasets and improving the accuracy of content filters.

Researchers like Koenecke, Brown, and Markelius are advocating for further actions to correct biases, including more diverse training data and varied demographic representation in development teams.

Final Thoughts

As we continue to interact with advanced AI models, it’s crucial to remember that these systems lack genuine understanding or intention. They function purely as sophisticated text prediction tools. Users should remain vigilant about the potential for bias in AI outputs and approach these interactions with caution.

As AI technology evolves, recognizing and addressing the underlying societal biases that permeate these systems becomes increasingly vital. It’s a challenge that extends far beyond technology, requiring a thoughtful examination of the societal structures that inform AI behavior.
