A Strategic Vision for AI Development: Who Will Pay Attention?

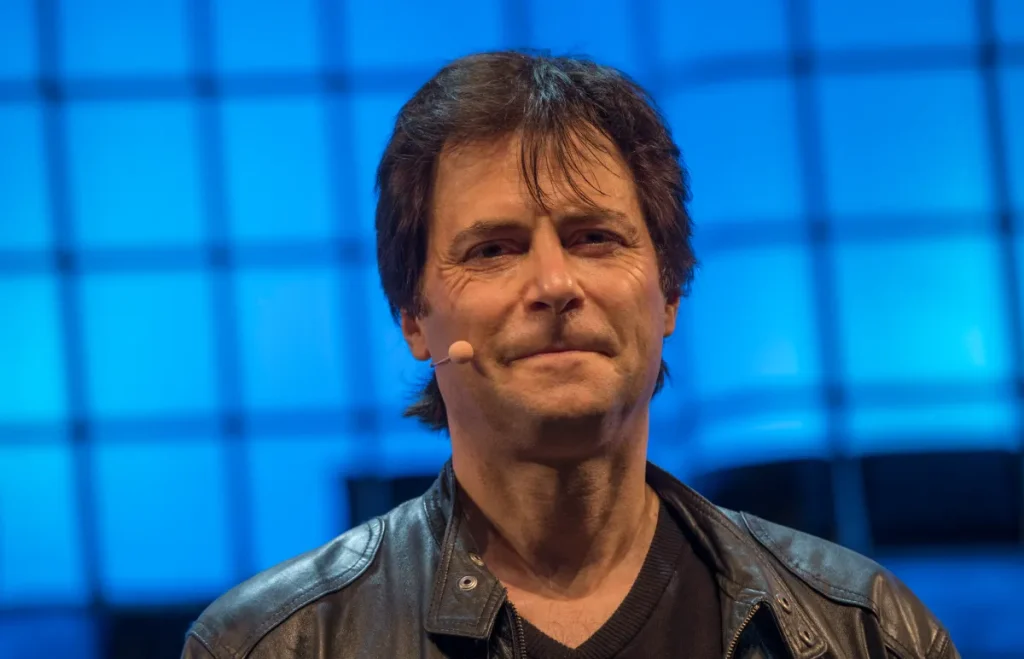

Image Credits: Horacio Villalobos – Corbis / Getty Images

A Call for Responsible AI: The Pro-Human Declaration

The Unfolding Crisis in AI Regulation

Washington’s recent split with Anthropic has revealed the glaring absence of a coherent regulatory framework for artificial intelligence (AI). A bipartisan group of thinkers and experts has now produced what the government has not: a comprehensive framework for responsible AI development known as the Pro-Human Declaration. The declaration was finalized shortly before the Pentagon-Anthropic confrontation, and that timing has made its significance all the more apparent.

Max Tegmark, an MIT physicist and AI researcher involved in the initiative, remarked, “There’s something quite remarkable that has happened in America just in the last four months.” Recent polls indicate that a staggering 95% of Americans oppose an unregulated race toward superintelligence.

Understanding the Pro-Human Declaration

The Pro-Human Declaration opens with a stark message: humanity stands at a crossroads. One path, termed “the race to replace,” poses the risk of humans being displaced—both as workers and as decision-makers—by machines and their unaccountable operators. The alternative pathway promotes AI as a tool for enhancing human abilities.

This vision for a human-centered AI future hinges on five essential pillars:

- Keeping Humans in Charge: Ensuring that human oversight prevails in all AI applications.

- Avoiding Concentration of Power: Preventing the accumulation of unchecked power in the hands of AI entities.

- Protecting the Human Experience: Fostering a human-centric approach to technology that prioritizes well-being.

- Preserving Individual Liberty: Upholding personal freedoms amidst advancing technologies.

- Legal Accountability for AI Companies: Establishing frameworks for holding AI developers responsible for their creations.

Notably, the declaration includes stringent provisions. It categorically prohibits the development of superintelligence until there is both scientific consensus on its safety and democratic validation. Additional measures include mandatory “off-switches” for advanced AI systems and a ban on architectures capable of self-replication or autonomous improvement.

The Urgency of the Declaration

The declaration’s urgency became even more pronounced following a dramatic February standoff between the Pentagon and Anthropic, a company whose AI systems are used in classified military applications. When Anthropic refused the Pentagon unlimited access to its technologies, Defense Secretary Pete Hegseth labeled the company a “supply chain risk,” a classification typically reserved for firms with ties to adversarial nations like China. In contrast, OpenAI quickly brokered a deal with the Defense Department, albeit one that experts believe may be challenging to enforce.

This situation underscores the high stakes tied to Congressional inaction on AI regulation. As Dean Ball, a senior fellow at the Foundation for American Innovation, aptly stated, “This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems.”

The Necessity for Child Safety in AI

Tegmark draws an analogy to drug regulation: “You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe,” he explained, emphasizing the critical role of regulatory bodies like the FDA. That kind of pre-release oversight offers a clear model for advocating AI safety measures.

In Tegmark’s view, focusing on child safety may be the most effective lever to drive meaningful regulation. The Pro-Human Declaration specifically calls for mandatory pre-deployment testing of AI systems, especially those designed for children. Potential risks to consider include:

- Increased Suicidal Ideation: Ensuring children’s interaction with AI does not exacerbate suicidal thoughts.

- Exacerbation of Mental Health Conditions: Preventing AI from worsening existing mental health issues in young users.

- Emotional Manipulation: Safeguarding against AI that could exploit emotional vulnerabilities in children.

Tegmark articulated a troubling scenario: if an adult were attempting to manipulate a child into harmful actions through text, that individual could face legal consequences. “So why is it different if a machine does it?” he asked.

Expanding the Scope of AI Testing

Once pre-release testing becomes standard for children’s AI products, Tegmark believes that additional requirements will naturally follow. The discussion may evolve to include:

- Preventing Terrorism: Ensuring AI cannot assist in creating bioweapons.

- Government Overthrow Concerns: Testing superintelligent AI to ensure it cannot undermine governmental authority.

A Unifying Human Agenda

Notably, the Pro-Human Declaration has garnered support from a diverse array of figures, including former Trump advisor Steve Bannon and Susan Rice, President Obama’s National Security Advisor. This convergence is striking, underscoring a fundamental agreement: all signatories prioritize the future of humanity over that of machines.

“What they agree on, of course, is that they’re all human,” Tegmark emphasized. “If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side.”

Conclusion

The Pro-Human Declaration represents a significant step toward creating a framework for responsible AI development. As we navigate the challenges posed by rapid technological advancements, it is essential to prioritize human welfare and well-being. By fostering a collaborative dialogue and centering the needs of humanity, we can steer the course of AI toward a future that enhances rather than diminishes our potential. It’s not just a matter of making AI safer—it’s about ensuring a future where technology and humanity can thrive together.
